I was recently working on a web-based project where no thought had been given to security during design or development.

The following outlines my experience with retrofitting security.

It’s my hope that someone will find it useful for their own implementation.

We’ll be focussing on the client side in this post (part 1) and the server side in part 2.

We’ll also cover some preliminary discussion that will set the stage for this series.

The application consists of a WCF service delivering content to embedding code that can be placed on any page in the browser.

The content is stored as Xml in the database and transformed into Html via Xslt.

The first activity I find useful is to go through the process of Threat Modelling the Application.

This process can be quite daunting for those new to it.

Here are a couple of references I find quite useful for getting started:

https://www.owasp.org/index.php/Application_Threat_Modeling

https://www.owasp.org/index.php/Threat_Risk_Modeling#Decompose_Application

Actually, this one’s not bad either.

There is no single right way to do this.

The more you read and experiment, the more equipped you will be.

The idea is to think like an attacker thinks.

This may come harder to some than others, but it is essential if you are to cover as many potential attack vectors as possible.

Remember, there is no secure system, just varying levels of insecurity.

As the person or team creating and maintaining your application, it will always be much harder for you to discover the majority of its security weaknesses than it is for the person attacking it.

The Threat Modelling topic is large and I’m not going to go into it here, other than to say that you need to go into it.

Threat Agents

Work out who your Threat Agents are likely to be.

Learn how to think like they do.

Learn what skills they have and learn the skills yourself.

Sometimes the skills are very non technical.

For example, walking through the door of your organisation over the weekend because the cleaners (or anyone else with access) forgot to lock up.

Or when the cleaners are there and the technical staff are not (which is just as easy).

It happens more often than we like to believe.

Defence in Depth

To attempt to mitigate attacks, we need to take a multi-layered approach (often called defence in depth).

What made me decide to start with sanitising user input from the browser anyway?

Well, according to the OWASP Top 10, Injection and Cross-Site Scripting (XSS) are still the most popular techniques chosen to compromise web applications.

So if you’re dealing with web apps, it makes sense to target the most commonly exploited techniques.

Now, regarding defence in depth for web applications:

If the attacker gets past the first line of defence, there should be something stopping them at the next layer and so forth.

The aim is to stop the attack as soon as possible.

This is why we focus on the UI first, and later move our focus to the application server, then to the database.

Bear in mind, though, that whatever we do on the client side can be circumvented relatively easily.

Client-side code is out of our control, so it is best effort only.

Because of this, we need to perform the following not only in the browser, but as much as possible on the server side as well.

- Minimising the attack surface

- Defining maximum field lengths (validation)

- Determining a white list of allowable characters (validation)

- Escaping untrusted data, especially where you know it’s going to end up in an execution context. Even where you don’t think this is likely, it’s still possible.

- Using Stored Procedures / parameterised queries (not covered in this series).

- Least Privilege: minimising the privileges assigned to every database account (not covered in this series).

Minimising the attack surface

Input fields should only allow certain characters to be entered.

Free-form text input fields, textareas, etc. (where anything is allowed) are very hard to constrain to a small white list.

Wherever possible, input fields should be constrained to well-structured data, like dates, social security numbers, zip codes, e-mail addresses, etc. The developer should then be able to define a very strong validation pattern, usually based on regular expressions, for validating such input. If the input field comes from a fixed set of options, like a drop-down list or radio buttons, then the input needs to match exactly one of the values offered to the user in the first place.
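As a minimal sketch of what that looks like in practice, here are two whitelist-style checks in JavaScript: one anchored regular expression per structured field, and an exact-match check for fixed sets of options. The field names and patterns are illustrative assumptions, not the rules from the application described here.

```javascript
// Strict, anchored patterns for structured fields.
// These two fields and their formats are illustrative assumptions.
const structuredPatterns = new Map([
  // Calendar date in yyyy-mm-dd form (checks the format, not that the day exists).
  ["date", /^\d{4}-(0[1-9]|1[0-2])-(0[1-9]|[12]\d|3[01])$/],
  // US ZIP code: five digits, optionally followed by a dash and four digits.
  ["zip", /^\d{5}(-\d{4})?$/],
]);

// True only if the entire value matches the field's pattern.
function isValidStructured(field, value) {
  const pattern = structuredPatterns.get(field);
  if (pattern === undefined) {
    throw new Error("no validation pattern defined for field: " + field);
  }
  return pattern.test(value);
}

// For drop-down lists and radio buttons: the submitted value must be exactly
// one of the values that were offered to the user.
function isOneOfOffered(value, offeredValues) {
  return offeredValues.includes(value);
}

console.log(isValidStructured("zip", "90210"));          // true
console.log(isValidStructured("zip", "90210<script>"));  // false
console.log(isOneOfOffered("purple", ["red", "green"])); // false
```

The `^` and `$` anchors matter: without them, the ZIP pattern would accept any value that merely contains five digits somewhere inside it.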

With the existing app I was working on, we had to allow just about everything in our free-form text fields.

This will have to be re-designed in order to provide constrained input.

Defining maximum field lengths (validation)

This was already being done (sometimes) in the Xml content for inputs where type="text".

Don’t worry about the inputType="single"; it gets transformed.

```xml
<input id="2" inputType="single" type="text" size="10" maxlength="10" />
```

If no maxlength is specified in the Xml, we now specify a default of 50 in the xsl used to do the transformation.

This way, inputs where type="text" were covered on the client side.

The same limits would also have to be validated on the server side when the service received values from these inputs.

```xml
<xsl:template match="input[@inputType='single']">
  <xsl:value-of select="@text" />
  <input name="a{@id}" type="text" id="a{@id}" class="textareaSingle">
    <xsl:attribute name="value">
      <xsl:choose>
        <xsl:when test="key('response', @id)">
          <xsl:value-of select="key('response', @id)" />
        </xsl:when>
        <xsl:otherwise>
          <xsl:value-of select="string(' ')" />
        </xsl:otherwise>
      </xsl:choose>
    </xsl:attribute>
    <xsl:attribute name="maxlength">
      <xsl:choose>
        <xsl:when test="@maxlength">
          <xsl:value-of select="@maxlength"/>
        </xsl:when>
        <xsl:otherwise>50</xsl:otherwise>
      </xsl:choose>
    </xsl:attribute>
  </input>
  <br/>
</xsl:template>
```
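The server side needs to apply the same rule, since the maxlength attribute only constrains well-behaved browsers. The service itself is .NET; to keep the examples in one language, here is a JavaScript sketch of the logic with hypothetical function names: use the field’s maxlength from the Xml if there is one, otherwise the same default of 50 the XSLT uses.

```javascript
// Same default the XSLT falls back to when the Xml has no maxlength.
const DEFAULT_MAX_LENGTH = 50;

// Resolve the limit for a field: a positive integer maxlength from the Xml,
// or the default when it is missing or unusable.
function effectiveMaxLength(maxlengthAttr) {
  const parsed = Number.parseInt(maxlengthAttr, 10);
  return Number.isInteger(parsed) && parsed > 0 ? parsed : DEFAULT_MAX_LENGTH;
}

// Reject rather than truncate: a value longer than the rendered maxlength is
// unlikely to have come from the form as we served it.
function withinMaxLength(value, maxlengthAttr) {
  return value.length <= effectiveMaxLength(maxlengthAttr);
}

console.log(withinMaxLength("12345", "10"));  // true
console.log(withinMaxLength("a".repeat(51))); // false (default of 50)
```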

For textareas we added maxlength validation as part of the white list validation.

See below for details.

Determining a white list of allowable characters (validation)

See the update at the bottom of this section.

Now this was quite an interesting exercise.

I needed to apply a white list to all characters being entered into the input fields.

A user can:

- type the characters in

- [ctrl]+[v] a clipboard full of characters in

- right click -> Paste

Covering all these scenarios as elegantly as possible was going to be a bit of a challenge.

I looked at a few JavaScript libraries, including one or two jQuery plug-ins.

None of them covered all these scenarios effectively.

I wish they had, because I wasn’t totally happy with my solution: it required polling.

That said, I measured performance, and even bringing the interval right down had a negligible effect. It also covered all scenarios.

```javascript
// Assumes jQuery, plus the globals currentPage, setupUserInputValidation and
// userInputValidationIntervalId declared elsewhere in the embedding code.
setupUserInputValidation = function () {
    var textAreaMaxLength = 400;
    var elementsToValidate;
    // Matches any character NOT in the white list, so it can be stripped out.
    var whiteList = /[^A-Za-z_0-9\s.,]/g;

    var elementValue = {
        textarea: '',
        textareaChanged: function (obj) {
            var initialValue = obj.value;
            var replacedValue = initialValue.replace(whiteList, "").slice(0, textAreaMaxLength);
            if (replacedValue !== initialValue) {
                this.textarea = replacedValue;
                return true;
            }
            return false;
        },
        inputtext: '',
        inputtextChanged: function (obj) {
            var initialValue = obj.value;
            var replacedValue = initialValue.replace(whiteList, "");
            if (replacedValue !== initialValue) {
                this.inputtext = replacedValue;
                return true;
            }
            return false;
        }
    };

    elementsToValidate = {
        textareainputelements: (function () {
            var elements = $('#page' + currentPage).find('textarea');
            if (elements.length > 0) {
                return elements;
            }
            return 'no elements found';
        }()),
        textInputElements: (function () {
            var elements = $('#page' + currentPage).find('input[type=text]');
            if (elements.length > 0) {
                return elements;
            }
            return 'no elements found';
        }())
    };

    // Store the interval's id in outer scope so we can clear the interval when we change pages.
    userInputValidationIntervalId = setInterval(function () {
        var element;
        // Iterate through each one and remove any characters not in the whitelist.
        // Iterate through each one and trim any that are longer than textAreaMaxLength.
        for (element in elementsToValidate) {
            if (elementsToValidate.hasOwnProperty(element)) {
                if (elementsToValidate[element] === 'no elements found') {
                    continue;
                }
                $.each(elementsToValidate[element], function () {
                    // Key into elementValue: 'textarea', or 'input' + type (e.g. 'inputtext').
                    var name = $(this).prop('tagName').toLowerCase();
                    name = name === 'input' ? name + $(this).prop('type') : name;
                    if (elementValue[name + 'Changed'](this)) {
                        this.value = elementValue[name];
                    }
                });
            }
        }
    }, 300); // milliseconds
};
```

Each time we change page, we clear the interval and reset it for the new page.

```javascript
clearInterval(userInputValidationIntervalId);
setupUserInputValidation();
```
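The work the polling loop does on each element boils down to a pure string transformation. Pulled out into a stand-alone function (a hypothetical helper, not part of the code above), it can be unit tested without a browser, and the same rules can be reused when the server-side validation is built.

```javascript
// Same character class as whiteList in setupUserInputValidation: it matches
// anything that is NOT a letter, digit, underscore, whitespace, full stop or comma.
const notWhiteListed = /[^A-Za-z_0-9\s.,]/g;

// Strip non-whitelisted characters, then cut the result down to maxLength
// characters if a limit is given (the polling code uses 400 for textareas).
function sanitiseInput(value, maxLength) {
  const stripped = value.replace(notWhiteListed, "");
  return maxLength === undefined ? stripped : stripped.slice(0, maxLength);
}

console.log(sanitiseInput("Hello, <b>world</b>!")); // Hello, bworldb
```

Note that `\s` lets tabs and newlines through as well as spaces, which is what you want for a textarea.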

Update 2013-06-02:

Now with HTML5 we have the pattern attribute on the input tag, which allows us to specify a regular expression that the input’s value is checked against when the form is validated. We can also see it here amongst the new HTML5 attributes. If used, this can make our JavaScript white listing redundant (although it rejects non-matching values rather than stripping characters as they are entered), provided we don’t have textareas, which the W3C neglected to give the pattern attribute. I’d love to know why.
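To see exactly what the pattern attribute checks, here is a rough JavaScript emulation. Per the HTML specification, the pattern must match the whole value (the browser effectively wraps it in `^(?:` and `)$`), and an empty value is not checked against it at all. Current browsers also compile the pattern with a Unicode-aware flag, which this sketch ignores. The whiteListPattern below is an assumed translation of the JavaScript whitelist plus the 50 character default, not something taken from the original page.

```javascript
// Rough emulation of the browser's check for <input pattern="...">.
function satisfiesPattern(value, pattern) {
  // An empty value never suffers a pattern mismatch; `required` handles that case.
  if (value === "") {
    return true;
  }
  // The pattern is implicitly anchored to the whole value.
  return new RegExp("^(?:" + pattern + ")$").test(value);
}

// The whitelist as attribute markup would be: pattern="[A-Za-z_0-9\s.,]{0,50}"
const whiteListPattern = "[A-Za-z_0-9\\s.,]{0,50}";

console.log(satisfiesPattern("Hello, world.", whiteListPattern)); // true
console.log(satisfiesPattern("<script>", whiteListPattern));      // false
```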

Escaping untrusted data

Escaped data will still render properly in the browser.

Escaping simply lets the interpreter know that the data is not intended to be executed, and thus prevents the attack.

What we do here is add a small method helper to Function.prototype, then use it to extend the String prototype with a function called htmlEscape.

```javascript
// Helper for adding a method to a constructor's prototype.
if (typeof Function.prototype.method !== "function") {
    Function.prototype.method = function (name, func) {
        this.prototype[name] = func;
        return this;
    };
}

String.method('htmlEscape', function () {
    // Escape the following characters with HTML entity encoding to prevent switching into any execution context,
    // such as script, style, or event handlers.
    // Using hex entities is recommended in the spec.
    // In addition to the 5 characters significant in XML (&, <, >, ", '), the forward slash is included as it helps to end an HTML entity.
    var character = {
        '&': '&amp;',
        '<': '&lt;',
        '>': '&gt;',
        '"': '&quot;',
        // Double escape character entity references.
        // Why?
        // The XmlTextReader that is set up in XmlDocument.LoadXml on the service considers the character entity references (such as &#x27; and &#x2F;) to be the character they represent.
        // All XML is converted to unicode on reading and any such entities are removed in favour of the unicode character they represent.
        // So we double escape character entity references.
        // These now get read into the XmlDocument and saved to the database as double encoded Html entities.
        // Now when these values are pulled from the database and sent to the browser, it decodes the &amp; and displays &#x27; and/or &#x2F;.
        // This isn't what we want to see in the browser.
        "'": '&amp;#x27;', // &apos; is not recommended
        '/': '&amp;#x2F;'  // forward slash is included as it helps end an HTML entity
    };
    return function () {
        return this.replace(/[&<>"'/]/g, function (c) {
            return character[c];
        });
    };
}());
```

This allows us to, well, html escape our strings.

```javascript
element.value.htmlEscape();
```
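If you would rather not extend built-in prototypes, the same idea works as a plain function. This sketch (the name escapeHtml is hypothetical) single-escapes all six characters; the double escaping of ' and / in htmlEscape is specific to this service’s XmlTextReader round trip and isn’t needed when the output goes straight into the page.

```javascript
// Entity for each character that could switch the browser into an execution context.
const htmlEntities = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#x27;",
  "/": "&#x2F;",
};

// Escape untrusted data before inserting it into HTML element content.
// A single pass over the string means "&" is never escaped twice.
function escapeHtml(untrusted) {
  return String(untrusted).replace(/[&<>"'\/]/g, (c) => htmlEntities[c]);
}

console.log(escapeHtml("Tom & Jerry's")); // Tom &amp; Jerry&#x27;s
```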

Looking through here, the only untrusted data we are capturing will be inserted into an Html element either as the content of a textarea element, or as the value attribute of input elements where type="text".

I initially thought I’d have to:

- Html escape the untrusted data which is only being captured from textarea elements.

- Attribute escape the untrusted data which is being captured from the value attribute of input elements where type="text".

RULE #2 (Attribute Escape Before Inserting Untrusted Data into HTML Common Attributes) of here mentions:

“Properly quoted attributes can only be escaped with the corresponding quote.”

So I decided to test it.

I created a collection of injection attacks, none of which worked.

It turned out we only needed to Html escape the untrusted data that was going to be inserted into the textarea element.

More on this in a bit.

Now, regarding the comments in the code above about having to double escape character entity references:

Because we’re sending the strings to the browser, it’s easiest to single decode the double encoded Html on the service side only.

We’re still focused on client-side sanitisation, but we’re going to shift our focus soon to making sure we cover the server side, so we know we’re going to have to create some sanitisation routines for our .NET service.

Because the routines are quite likely going to be static, and we’re pretty much just dealing with strings, let’s create an extensions class in a new project in a common library we’ve already got.

This will allow us to get the widest use out of our sanitisation routines.

It also allows us to wrap any existing libraries, or parts of them, that we want to make use of.

```csharp
namespace My.Common.Security.Encoding
{
    /// <summary>
    /// Provides a series of extension methods that perform sanitisation.
    /// Escaping, unescaping, etc.
    /// Usually targeted at user input, to help defend against the likes of XSS attacks.
    /// </summary>
    public static class Extensions
    {
        /// <summary>
        /// Returns a new string in which all occurrences of a double escaped html character (that's an html entity whose &amp; has itself been escaped)
        /// in the current instance are replaced with the single escaped character.
        /// </summary>
        /// <param name="source">The string to decode.</param>
        /// <returns>The new string.</returns>
        public static string SingleDecodeDoubleEncodedHtml(this string source)
        {
            // e.g. "&amp;#x27;" becomes "&#x27;" and "&amp;#x2F;" becomes "&#x2F;".
            return source.Replace("&amp;#x", "&#x");
        }
    }
}
```

Now when we run our xslt transformation on the service, we chain our new extension method on the end.

This gives us back a single encoded string that the browser is happy to display as the decoded value.

```csharp
return Transform().SingleDecodeDoubleEncodedHtml();
```
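The round trip described in the htmlEscape comments is easier to follow with concrete strings. The following is a simulation, not the real .NET pipeline: plain string replacements stand in for the XmlTextReader decoding `&amp;` and for the transform’s output re-escaping `&`, and singleDecodeDoubleEncodedHtml is a JavaScript stand-in for what the extension method is meant to do (turn `&amp;#x` back into `&#x`).

```javascript
// JavaScript stand-in for the SingleDecodeDoubleEncodedHtml extension method.
function singleDecodeDoubleEncodedHtml(source) {
  return source.split("&amp;#x").join("&#x");
}

// 1. In the browser, htmlEscape double escapes the apostrophe.
const sentByBrowser = "It&amp;#x27;s";

// 2. On the service, reading the Xml resolves &amp; to & (simulated here),
//    leaving the single escaped entity as literal text.
const afterXmlRead = sentByBrowser.replace(/&amp;/g, "&");

// 3. Writing that text back out as Html escapes the literal & again (simulated),
//    so the browser would display "&#x27;" rather than an apostrophe.
const afterTransform = afterXmlRead.replace(/&/g, "&amp;");

// 4. The single decode restores an entity the browser renders as '.
const sentToBrowser = singleDecodeDoubleEncodedHtml(afterTransform);

console.log(afterXmlRead);   // It&#x27;s
console.log(afterTransform); // It&amp;#x27;s
console.log(sentToBrowser);  // It&#x27;s
```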

Now back to my findings from the test above.

It turns out that “Properly quoted attributes can only be escaped with the corresponding quote.” really is true.

I thought that if I entered something like the following into the value attribute of an input element where type="text", the first double quote would be interpreted as the quote closing the value attribute, and the value attribute’s own closing quote would then be interpreted as the end quote of the onmouseover attribute value.

```
" onmouseover="alert(2)
```

What actually happens is that during the transform…

```csharp
xslCompiledTransform.Transform(xmlNodeReader, args, writer, new XmlUrlResolver());
```

…all the relevant double quotes are converted to the double quote Html entity &quot;.

Meanwhile, double quotes are stored in the database as the literal character.
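Why the attack fails is easy to demonstrate: once the double quotes in the input become `&quot;`, the browser can’t treat them as the end of the value attribute, so the whole string stays inert attribute text. A minimal sketch, assuming a double-quoted attribute (escapeAttributeValue is a hypothetical helper, not what the XSLT processor calls internally):

```javascript
// Escape & first, then ", so the entities produced are not themselves re-escaped.
function escapeAttributeValue(untrusted) {
  return String(untrusted).replace(/&/g, "&amp;").replace(/"/g, "&quot;");
}

const attack = '" onmouseover="alert(2)';
const markup = '<input type="text" value="' + escapeAttributeValue(attack) + '" />';

// The attack string never closes the value attribute, so no onmouseover
// attribute is created.
console.log(markup); // <input type="text" value="&quot; onmouseover=&quot;alert(2)" />
```

This only holds for properly quoted attributes; an unquoted attribute value can be broken out of with something as simple as a space.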

Libraries and useful code

Microsoft Anti-Cross Site Scripting Library

OWASP Encoding Project

The Reform library, which supports Perl, Python, PHP, JavaScript, ASP, Java and .NET

Online escape tool supporting Html escape/unescape, Java, .NET, JavaScript

The characters that need escaping for inserting untrusted data into Html element content

JavaScript: The Good Parts, p. 90, has a nice ‘entityify’ function

OWASP Enterprise Security API for JavaScript (ESAPI4JS), used for JavaScript escaping

jQuery plugin

Changing encoding on html page

Cheat Sheets and Check Lists I found helpful

https://www.owasp.org/index.php/Input_Validation_Cheat_Sheet

https://www.owasp.org/index.php/OWASP_Validation_Regex_Repository

https://www.owasp.org/index.php/XSS_(Cross_Site_Scripting)_Prevention_Cheat_Sheet

https://www.owasp.org/index.php/DOM_based_XSS_Prevention_Cheat_Sheet

https://www.owasp.org/index.php/OWASP_AJAX_Security_Guidelines

If any of this is unclear, let me know and I’ll do my best to clarify. Maybe you have suggestions of how this could have been improved? Let’s spark a discussion.

Tags:info sec, injection, Security, sql injection, threat modeling, XSRF, XSS

Posted in Architecture, C#, JavaScript, Networking, Scripting, Security, Web

December 27, 2011

I recently acquired a second-hand Asus laptop from work that will be performing a handful of responsibilities on one of my networks.

This is the process I followed to set up OpenSSH on Cygwin running on the Windows 7 box.

I won’t be going over the steps to tunnel RDP, as I’ve already done this in another post.

Make sure your LAN Manager Authentication Level is set as high as practical.

Keep in mind that some networked printers using SMB may struggle with this set to high.

- Windows Firewall -> Allowed Programs -> checked Remote Desktop.

- System Properties -> Remote tab -> turn radio button on to at least “Allow connections from computers running any version of Remote Desktop”

If you like, this can be turned off once SSH is set up, or you can just turn off the firewall rule that lets RDP in.

CopSSH, which I used on my last set of Linux to Windows RDP via SSH set-ups, is no longer free.

I’m not going to pay for something I can get for free with a little extra work.

So I looked at some other Windows SSH offerings:

- freeSSHd, which looked like a simple set-up but didn’t appear to be currently maintained.

- OpenSSH: the current latest version, 5.9, was released on September 6, 2011.

A while back OpenSSH wasn’t being maintained. Looks like that’s changed.

OpenSSH is part of Cygwin, so you need to create a c:\cygwin directory and download setup.exe into it.

- Right click on c:\cygwin\setup.exe and select “Run as Administrator”. Click Next.

- If Install from Internet is not checked, check it. Then click Next.

- Accept the default “Root Directory” of C:\cygwin. Accept the default for “Install For” as All Users.

- Accept the default “Local Package Directory” of C:\cygwin.

- Accept the default “Select Your Internet Connection” of “Direct Connection”. Click Next.

- Select the closest mirror to you. Click Next.

- You can expand the list by clicking the View button, or just expand the Net node.

- Find openssh and click the Skip text, so that the Bin check box for the item is on.

- Find tcp_wrappers and click the Skip text, so that the Bin check box for the item is on.

If you selected tcp_wrappers and get the “ssh_exchange_identification: Connection closed by remote host” error, you’ll need to edit /etc/hosts.allow and add the following two lines before the PARANOID line.

```
ALL : 127.0.0.1/32 : allow
ALL : [::1]/128 : allow
```

In my case, these lines were already in /etc/hosts.allow.

- (Optional) Find the package “diffutils” and click on the word “skip” so that an x appears in Column B.

- Find the package “zlib” and click on the word “skip” (it should already be selected) so that an x appears in Column B.

- Click Next to start the install.

- At the Resolving Dependencies step, keep the default “Select required packages…” checked and click Next again.

At the end of the install, I got the “Program Compatibility Assistant” stating “This program might not have installed correctly”.

I clicked “This program installed correctly”.

Add Cygwin to your system Path environment variable: edit the Path and append ;c:\cygwin\bin

Right click the new Cygwin Terminal shortcut and Run as administrator.

Make sure the following files have the correct permissions.

/etc/passwd -rw-r--r--

/etc/group -rw-r--r--

/var drwxr-xr-x
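The permissions above are in `ls -l` notation, while chmod usually takes an octal mode. As a worked example of the conversion, here is a small JavaScript helper (hypothetical, not part of Cygwin): each rwx triplet adds up r=4, w=2, x=1, which gives 644 for /etc/passwd and /etc/group and 755 for /var, i.e. `chmod 644 /etc/passwd /etc/group` and `chmod 755 /var`.

```javascript
// Convert an `ls -l` style permission string such as "-rw-r--r--" to the
// octal mode chmod accepts. Special bits (setuid, setgid, sticky) are out of scope.
function symbolicToOctal(perms) {
  if (perms.length !== 10) {
    throw new Error("expected a 10 character permission string, got: " + perms);
  }
  const expected = "rwx";
  let octal = "";
  // perms[0] is the file type (- or d); then come the owner, group and others triplets.
  for (let start = 1; start < 10; start += 3) {
    let digit = 0;
    for (let i = 0; i < 3; i++) {
      const ch = perms[start + i];
      if (ch === expected[i]) {
        digit += 4 >> i; // r = 4, w = 2, x = 1
      } else if (ch !== "-") {
        throw new Error("unsupported permission character: " + ch);
      }
    }
    octal += digit;
  }
  return octal;
}

console.log(symbolicToOctal("-rw-r--r--")); // 644
console.log(symbolicToOctal("drwxr-xr-x")); // 755
```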

Create an sshd.log file in /var/log/:

```sh
touch /var/log/sshd.log
chmod 664 /var/log/sshd.log
```

Run ssh-host-config

- Cygwin will then ask “Should privilege separation be used?” Answer yes.

- Cygwin will then ask “Should this script create a local user ‘sshd’ on this machine?” Answer yes.

- Cygwin will then ask “Do you want to install sshd as service?” Answer yes.

- Cygwin will then ask for the value of CYGWIN for the daemon: []. Answer ntsec tty.

- Cygwin will then ask “Do you want to use a different name?” Answer no.

- Cygwin will then ask “Please enter a password for new user cyg_server”. Enter a password twice and remember it.

Replicate your Windows user accounts and groups in Cygwin:

```sh
mkpasswd -cl > /etc/passwd
mkgroup --local > /etc/group
```

I think (although I haven’t tried it yet) that when you change your user password, which you should do regularly, you should be able to run the above 2 commands again to update your password.

As I haven’t done this yet, I would take a backup of these files before running the commands.

To start the service, type the following:

```sh
net start sshd
```

Test SSH:

```sh
ssh localhost
```

Because /etc/sshd_config is owned by cyg_server, you’ll need to make any changes to it as that owner.

I added the following line to the end of the file:

```
Ciphers blowfish-cbc,aes128-cbc,3des-cbc
```

as I’d read that Blowfish runs faster than the default AES-128.

There is also a collection of changes to be made to /etc/sshd_config, for example:

- Reduce LoginGraceTime to as small a number as practical.

- PermitRootLogin no

- Set PasswordAuthentication to no once you get key pair auth set up.

- PermitEmptyPasswords no

- You can also set up AllowUsers and DenyUsers.
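Put together, those settings might look something like the following fragment of /etc/sshd_config. The LoginGraceTime value and the user name are assumptions for illustration; pick values that suit your own set-up, and only turn PasswordAuthentication off once key pair auth is working.

```
# Give clients 30 seconds to authenticate (assumed value; the default is 120).
LoginGraceTime 30
PermitRootLogin no
PermitEmptyPasswords no
# Only after key pair authentication has been confirmed to work.
PasswordAuthentication no
# Hypothetical account name; list the users allowed to log in.
AllowUsers MyUserName
```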

The options available are here in the man page (link updated 2013-10-06).

This is also helpful; I used it for my CopSSH set-up.

Open TCP port 22 in the firewall, and close the RDP port once SSH is working.

As my blog post says:

```sh
ssh-copy-id MyUserName@MyWindows7Box
```

I already had a key pair with pass phrase, so I used that.

Now we should be able to ssh without being prompted for a password, but instead using key pair auth.

The links I found helpful:

http://pigtail.net/LRP/printsrv/cygwin-sshd.html

http://www.petri.co.il/setup-ssh-server-vista.htm

http://www.scottmurphy.info/open-ssh-server-sshd-cygwin-windows

Tags:Linux, Networking, OpenSSH, Security, SSH

Posted in GNU/Linux, Microsoft, Networking, Security, SSH

June 16, 2011

Part one of a three part series on setting up a UPS solution to enable clean shutdown of vital network components.

This post is essentially about setting up a Smart-UPS and its NMC (Network Management Card), as the project I embarked upon was a little large for a single post.

Christchurch, NZ used to have quite stable power, but the earthquakes we’ve been having recently have changed that.

Now we endure very unstable power.

This fact, along with the risk to my RAID arrays if they were being written to when a power outage occurred, prompted me to get my A into G on this project.

For a while now I’ve been looking into setting up a UPS solution to support my critical servers.

I already had a couple of UPSs:

Liebert PowerSure 250 VA

Eaton Powerware 5110 500 VA

Both were a bit small to support a fairly hungry hypervisor, a dedicated file server, a 24 port Cisco Catalyst switch and a home-made router.

Also, the FreeNAS (BSD) USB driver that was supposed to work with the 5110 didn’t seem to.

Considering the above, I had a couple of options.

With ESXi we can use an APC UPS and a network management card, or the Powerware 5110 connected to a network USB hub and a virtual guest listening to its events, ready to issue shutdown procedures as per James Pearce’s solution, but to any number of machines.

What I wanted was a single UPS plugged into a single box that would receive on battery events and do the work of shutting down the various machines listed (any type of machine, including virtual hosts and guests).

There didn’t appear to be a single piece of software that would do this, so I wrote it.

I’ll go over this in a later post.

So I would need either a network-connected USB hub, as explained here:

Simple Two Port Network Connected USB Hub

Hardware solutions (all of which work well from VM guests, from what I’ve read):

AnywhereUSB from Digi …

USB server from Keyspan

USB Anywhere from Belkin

Software solutions (these need a physical PC with the USB device/s plugged in):

USB@nywhere …

USB over Network …

USB Redirector

Or a network management card (something like the AP9606) for the UPS, as explained here by James.

The Powerware 5110 doesn’t support a network management card, so the only option I see for this UPS is a network-connected USB hub.

APC SMART-UPS supports network management cards and I think these would be the best option for this UPS.

The AP9606

It was starting to look like an APC UPS would be the better option.

I had already been looking for one of these for quite a while, and I missed a couple of them.

The one I eventually picked up

APC Smart-UPS

Second hand APC Smart-UPS 1500

$200 + shipping = just under $300.

AP9606 NMC $50 + shipping = approx. $80.

So for $380, even if I had needed a new battery ($250), I would still have had a $1300 UPS (NMC not included) for $600.

Turned out the battery was fine,

so all up $380 to support a bunch of hardware.

You’ll need to give the card an IPv4 address that suits your subnet.

As my card was second hand, it already had one,

but I didn’t know what it was.

In order to give the card an IP, you have 2 obvious options:

1. serial cable and terminal emulator

2. Ethernet and ARP

As I didn’t have the “special” serial cable,

I decided to go the Ethernet route.

I would need the MAC address.

The subnet mask and default gateway also need to be set up.

Pg 11 of APC_ap9606_installation_guide.pdf goes through the procedure.

APC devices have MAC addresses that begin with 00 C0 B7, although my network management card also had a sticker with the MAC address on it.

“You may want to check your DHCP client list for any MAC addresses beginning with 00 C0 B7, which indicates an APC address. In addition, check the card you are trying to configure. Any card with valid IP settings will have a solid green status LED.”
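If the DHCP client list is long, filtering it by that prefix is a one-liner. A sketch, with isApcMac as a hypothetical helper and made-up example addresses; it only checks the 00:C0:B7 prefix quoted above, so a device using any other prefix would be missed:

```javascript
// Prefix (first three bytes) that the AP9606 guide says indicates an APC device.
const APC_OUI = "00C0B7";

// Normalise "00:c0:b7:..", "00-C0-B7-.." or "00 C0 B7 .." to 12 hex digits,
// then check the first three bytes.
function isApcMac(mac) {
  const hex = mac.replace(/[:\- ]/g, "").toUpperCase();
  return /^[0-9A-F]{12}$/.test(hex) && hex.startsWith(APC_OUI);
}

// Hypothetical DHCP client list.
const dhcpClients = ["00:11:22:33:44:55", "00:c0:b7:12:34:56"];
console.log(dhcpClients.filter(isApcMac)); // [ '00:c0:b7:12:34:56' ]
```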

When I received my AP9606 Web/SNMP Management Card, I didn’t have a clue what the IP address had been set to.

If it had been a new card, the address wouldn’t have been set yet, and I could easily have set it without having to work out what its subnet was.

Pg 11 of the “Web/SNMP Management Card Installation Manual” goes through setting up an IP from scratch using ARP.

So I plugged my notebook into the AP9606’s Ethernet port and spun up Wireshark.

What you’ll generally be looking for is a record with the Source looking like "American_[last 3 bytes of MAC]":

```
No.  Time        Source             Destination  Protocol  Info
231  715.948894  American_42:6f:b1  Broadcast    ARP       Who has 10.1.80.3?  Tell 10.1.80.222
```

And an ARP request that looks something like the following…

The first 3 bytes of the MAC will always be 00-C0-B7 for an AP9606.

```
Address Resolution Protocol (request)
    Sender MAC address: American_42:6f:b1 (00:c0:b7:[3 more octets here])
    Sender IP address: 10.1.80.222 (10.1.80.222)
    Target MAC address: American_42:6f:b1 (00:c0:b7:[3 more octets here])
    Target IP address: 10.1.80.3 (10.1.80.3)
```

I set the notebook to use a static IP of 10.1.80.2/24, and set its default gateway to the Target IP of 10.1.80.3.

You may have to play around a bit with the subnet mask until you get it right.

I was just lucky.
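Getting the subnet mask right comes down to whether the notebook’s address and the card’s address share the same network bits for a given prefix length. A small JavaScript sketch (hypothetical helpers, IPv4 only) for checking a candidate address against the card’s address from the ARP capture:

```javascript
// Parse a dotted-quad IPv4 address into an unsigned 32-bit integer.
function ipv4ToInt(address) {
  const parts = address.split(".");
  if (parts.length !== 4 || !parts.every((p) => /^\d{1,3}$/.test(p) && Number(p) <= 255)) {
    throw new Error("invalid IPv4 address: " + address);
  }
  // `>>> 0` keeps the result unsigned (bitwise operators work on signed 32-bit ints).
  return parts.reduce((acc, p) => ((acc << 8) | Number(p)) >>> 0, 0);
}

// True if both addresses are on the same network for the given prefix length (0-32).
function sameSubnet(a, b, prefixLength) {
  // A /0 mask is all zeros; shifting by 32 would be a no-op in JavaScript.
  const mask = prefixLength === 0 ? 0 : (0xffffffff << (32 - prefixLength)) >>> 0;
  return ((ipv4ToInt(a) & mask) >>> 0) === ((ipv4ToInt(b) & mask) >>> 0);
}

console.log(sameSubnet("10.1.80.2", "10.1.80.222", 24)); // true
```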

I then tried using ARP to assign the new IP address, but it wasn’t sticking.

So I tried to telnet in and was prompted for a username and password.

The default of apc for both didn’t work, so it had obviously already been changed.

There is also another account (username User, password apc), but this either didn’t exist or had been changed.

So I contacted APC about the backdoor account discussed here, and was directed to here.

This is no good unless you have the special serial cable, which I didn’t.

I asked for the pin layout of the cable and was told that they make them, but don’t know what the pin layout is.

The nice fellow at APC support directed me to a cable to buy.

A little pricey at NZ$100 for a single-use cable.

There is no proper way to reset the password by the Ethernet interface.

This left me with two obvious options.

1. Make up a serial cable with, I believe, the following pinout, and find a computer with a COM port:

- Pin#2 Female to Pin#2 Male
- Pin#3 Female to Pin#1 Male
- Pin#5 Female to Pin#9 Male

Layout info found here. It was correct.

That would cost next to nothing.

2. Just crack the credentials with one of these.

The second seemed like it would be the path of least resistance at the time (this turned out to be incorrect), as I had the software, but not enough parts for a serial cable.

THC-Hydra seemed like a good option.

Once I downloaded and ran Hydra, I received the following error:

```
5 [main] ? (1988) C:\cygwin\bin\bash.exe: *** fatal error - system shared
memory version mismatch detected - 0x75BE0074/0x75BE0096.
This problem is probably due to using incompatible versions of the cygwin DLL.
Search for cygwin1.dll using the Windows Start->Find/Search facility
and delete all but the most recent version. The most recent version *should*
reside in x:\cygwin\bin, where 'x' is the drive on which you have
installed the cygwin distribution. Rebooting is also suggested if you
are unable to find another cygwin DLL
```

This error is due to having incompatible versions of cygwin1.dll on your system.

So I did a search for them and found that my SSH install had an older version of cygwin1.dll.

I renamed it and still had problems, so I rebooted, and all was good.

The only cygwin1.dll should be the one in the same directory that hydra.exe is run from.

How to use THC-Hydra

Some good references here…

http://www.youtube.com/watch?v=kzJFPduiIsI

http://www.pauldotcom.com/2007/03/01/password_cracking_with_thchydr.html

The command I used:

```
C:\hydra-5.4-win>hydra -L logins.txt -P passwords.txt -e n -e s -o hydraoutput.txt -v 10.1.80.222 telnet "Welcome hacker"
```

I got a false positive: user name n/a, password steven.

So rather than spend more time populating logins.txt and passwords.txt, I decided to try the serial cable route.

As it turned out, I wouldn’t have guessed the username; I found this out once I logged on using the serial interface.

This is the pinout I used.

This is the single use cable I made.

Total cost of $0.00

Make sure you’re all plugged in.

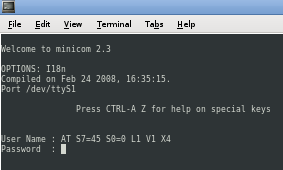

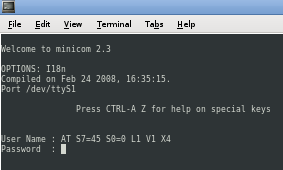

I used minicom as my terminal emulator to connect to the UPS’s com port.

Installation and usage details here.

You need to make sure your serial port/s are on in the BIOS.

I didn’t check mine, but they were on.

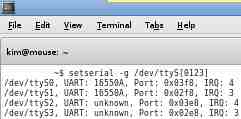

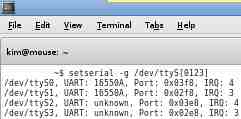

You need to make sure Linux knows about your serial port/s.

Run the following command:

Use setserial to provide the configuration information associated with your serial ports.

Configuring your serial ports.

To set up your terminal emulator (minicom in my case):

```sh
$ minicom -s -c on
```

Choose “Serial port setup” and you will be presented with a menu like the following.

This is where you get to set the following: 2400 bps, 8 data bits, no parity, one stop bit, and flow control set to none.

Then select Save setup as dfl, and Exit.

You should now be prompted for authentication from the Smart-UPS.

Or you can choose “Exit from Minicom” and run the following later:

```sh
$ minicom -c on
```

If you get output like…

```
Device /dev/ttyS[number of your port here] is locked.
```

You’ll have to:

```sh
# rm /var/tmp/LOCK..ttyS[number of your port here]
```

Now you get to log on with the default user/pass apc/apc.

Press the reset button on the AP9606 and press the Enter key, repeatedly if necessary.

This pokes the AP9606 into giving you a login prompt.

Once you get the User Name prompt, you can enter the “apc” user (without the quotes), and then for the Password, “apc” (without the quotes).

You have a 30 second window here to log in; otherwise you have to repeat the reset process and try again.

From the Control Console menu, select System, then User Manager.

Select Administrator, and change the User Name and Password settings, both of which are currently apc.

I also changed the IP settings.

From the Control Console, select 2- Network, then 1- TCP/IP, and change your IP settings.

There are quite a few settings you can change on the card; you should just be able to follow your nose from here.

You’ll also want to make sure Web Access is enabled.

Take note of the port too, usually 8000.

Changing the password via the serial interface is also detailed here.

This post was also quite helpful.

I changed the IP settings on my notebook back to how they were, and could then connect via telnet and HTTP.

I turned MD5 on to try to boost the security of passing credentials to the web UI.

It turned out the JRE is also needed for this.

I went through that process and it was looking promising, but the web UI no longer accepted my password.

I’m not sure why this is, but it means that if you want to be secure when you log into the web UI, you are going to have to plug your Ethernet cable directly into the AP9606.

Otherwise you’re passing credentials in plain text.
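To see why “plain text” is no exaggeration: if the web UI uses HTTP Basic authentication (an assumption; I haven’t confirmed what the AP9606 uses), the Authorization header is just base64-encoded `user:password`, and anyone sniffing the wire can reverse it. Telnet is no better, sending your keystrokes as-is. The helper names below are illustrative.

```python
import base64


def basic_auth_header(user: str, password: str) -> str:
    """Build the value of an HTTP Basic Authorization header."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"


def recover_credentials(header_value: str) -> tuple[str, str]:
    """What an eavesdropper does: decode the header back to user and password."""
    scheme, _, token = header_value.partition(" ")
    if scheme != "Basic":
        raise ValueError("not a Basic auth header")
    user, _, password = base64.b64decode(token).decode("utf-8").partition(":")
    return user, password


if __name__ == "__main__":
    header = basic_auth_header("apc", "apc")
    print(header)                       # Basic YXBjOmFwYw==
    print(recover_credentials(header))  # ('apc', 'apc')
```

Base64 is an encoding, not encryption, so only a secured channel (or a direct cable, as above) keeps the credentials private.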

Upgrade of firmware

The latest firmware is found here.

Directions on upgrading are found here.

That said, APC recommended I use the earlier aos325.bin and sumx326.bin from here if using Windows XP.

Some details around the firmware required for the different management card types for use in a Smart Slot equipped APC UPS

The firmware version is found under Help->About System on the NMC’s Web interface.

Posted in Networking, Projects, Security, UPS

June 6, 2011

If you like the idea of

- Removing annoying ads while browsing the web

- Minimising the likelihood of having your privacy compromised by way of spyware, unwanted analytics, Cross-Site Scripting (XSS), and others

- Gaining control over who can download what

- Monitoring what exactly is being downloaded or even attempted

Keep reading if you’d like to know the process I went through to achieve the above.

hosts file

Most, if not all, operating systems have a hosts file.

You can add all the dodgy domains you want blocked to your hosts file and direct them to localhost.

Example of hosts file with blocked domains

Providing your hosts file is kept up to date, this is one way of blocking these domains.
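A minimal sketch of turning a list of domains into hosts-file entries that point at the loopback address. The domain names are placeholders, `block_entries` is a hypothetical helper, and the hostname check is deliberately loose.

```python
import re

# Loose hostname check: dot-separated labels of letters, digits and hyphens.
HOSTNAME_RE = re.compile(
    r"^(?=.{1,253}$)([a-z0-9]([a-z0-9-]{0,61}[a-z0-9])?\.)+[a-z]{2,63}$"
)


def block_entries(domains, target="127.0.0.1"):
    """Return hosts-file lines mapping each valid, unique domain to target."""
    seen = set()
    lines = []
    for d in domains:
        d = d.strip().lower().rstrip(".")       # normalise case and trailing dot
        if d and d not in seen and HOSTNAME_RE.match(d):
            seen.add(d)
            lines.append(f"{target}\t{d}")
    return lines


if __name__ == "__main__":
    print("\n".join(block_entries(["ads.example.com", "tracker.example.net"])))
```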

Example hosts files

http://hostsfile.mine.nu/downloads/

http://winhelp2002.mvps.org/hosts.htm

http://someonewhocares.org/hosts/

On some systems if you add the dodgy sites to your hosts file, you may experience the “waiting for the ad server” problem.

As far as your browser is concerned, these URLs don’t exist (because it’s looking at localhost).

Your browser may wait for a timeout for the blocked server.

In this case you could use eDexter to serve up a local image instead of waiting for a server timeout.
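The idea behind eDexter is simply to have something listening locally that answers every request with a tiny image, so the browser isn’t left waiting. A rough stand-in using only Python’s standard library (not eDexter itself, and HTTP only): it answers any GET with a 1x1 transparent GIF. Binding to port 80, where requests for blocked hostnames would land, needs root/administrator rights; `make_pixel_server` is a hypothetical name.

```python
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# The classic 43-byte 1x1 transparent GIF.
PIXEL_GIF = (b"GIF89a\x01\x00\x01\x00\x80\x00\x00\x00\x00\x00\xff\xff\xff"
             b"!\xf9\x04\x01\x00\x00\x00\x00"
             b",\x00\x00\x00\x00\x01\x00\x01\x00\x00\x02\x02D\x01\x00;")


class PixelHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Whatever was asked for, reply immediately with the blank pixel.
        self.send_response(200)
        self.send_header("Content-Type", "image/gif")
        self.send_header("Content-Length", str(len(PIXEL_GIF)))
        self.send_header("Cache-Control", "max-age=86400")
        self.end_headers()
        self.wfile.write(PIXEL_GIF)

    def log_message(self, format, *args):
        pass  # keep the console quiet


def make_pixel_server(host="127.0.0.1", port=80):
    return ThreadingHTTPServer((host, port), PixelHandler)


if __name__ == "__main__":
    make_pixel_server().serve_forever()
```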

At this time, only OS X and Windows versions are available.

There is an alternative.

JavaDog will apparently run on all platforms that have the Java VM.

This doesn’t appear to be in the Debian repositories. At least not the ones I’m using.

I read here “As for Edexter, Firefox in Linux doesn’t seem to have the “waiting for the ad server” problem Mozilla in windows had.”

From my experience it does.

I had a quick look at JavaDog for Linux.

Found this site

It can be an administrative pain to keep the hosts file up to date with the additions and removals of domains.

Linux users could, however, use the script here to do the updating, and run it from a cron job.

If you’re on a Windows box, you may run into another type of slowdown: every 25 minutes, for about 5 minutes, with apparently 100% CPU usage, resulting in the described DNS cache timeout error.

There is a workaround, disabling the DNS Client service, but I wouldn’t be very happy with it.

If you rely on Network Discovery (enables you to see other computers on your network and for them to see you), this is not going to be a solution.

As stated here

A better Win7/Vista workaround would be to add two Registry entries to control the amount of time the DNS cache is saved.

- Flush the existing DNS cache (see above)

- Start > Run (type) regedit

- Navigate to the following location:

HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\Dnscache\Parameters

- Click Edit > New > DWORD Value (type) MaxCacheTtl

- Click Edit > New > DWORD Value (type) MaxNegativeCacheTtl

- Next right-click on the MaxCacheTtl entry (right pane) and select: Modify and change the value to 1

- The MaxNegativeCacheTtl entry should already have a value of 0 (leave it that way – see screenshot)

- Close Regedit and reboot …

- As usual you should always backup your Registry before editing … see Regedit Help under “Exporting Registry files”
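Rather than clicking through regedit, the same two values could be captured in a .reg file and imported by double-clicking it. A small sketch that writes such a file; the values mirror the steps above (MaxCacheTtl = 1, MaxNegativeCacheTtl = 0), and the output filename `dns-cache-ttl.reg` is just an example. Back up the registry first, as above.

```python
KEY = r"HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\Dnscache\Parameters"


def dns_cache_reg(max_cache_ttl: int = 1, max_negative_cache_ttl: int = 0) -> str:
    """Return .reg file text setting the two DNS Client cache TTLs as DWORDs."""
    return (
        "Windows Registry Editor Version 5.00\r\n"
        "\r\n"
        f"[{KEY}]\r\n"
        f'"MaxCacheTtl"=dword:{max_cache_ttl:08x}\r\n'
        f'"MaxNegativeCacheTtl"=dword:{max_negative_cache_ttl:08x}\r\n'
    )


if __name__ == "__main__":
    # regedit exports "Version 5.00" files as UTF-16 with a BOM; the "utf-16"
    # codec writes the BOM, and newline="" keeps the CRLFs from being doubled.
    with open("dns-cache-ttl.reg", "w", encoding="utf-16", newline="") as f:
        f.write(dns_cache_reg())
```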

If you decide to give the hosts file a go

On Linux it’s /etc/hosts.

On Windows its location is defined by the DataBasePath value of the following registry key:

HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\Tcpip\Parameters

It’s usually here:

Windows 7/Vista/XP = C:\WINDOWS\SYSTEM32\DRIVERS\ETC

Windows 2K = C:\WINNT\SYSTEM32\DRIVERS\ETC
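If you script any of this, the location can be looked up rather than hard-coded. A sketch, assuming non-Windows systems use `/etc/hosts` and falling back to the usual `%SystemRoot%\System32\drivers\etc` if the registry value can’t be read; `hosts_path` is a hypothetical helper.

```python
import os
import sys


def hosts_path() -> str:
    """Best-guess location of the hosts file on this machine."""
    if sys.platform != "win32":
        return "/etc/hosts"
    import winreg  # Windows-only module, so imported lazily
    try:
        with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE,
                            r"SYSTEM\CurrentControlSet\Services\Tcpip\Parameters") as key:
            db_path, _ = winreg.QueryValueEx(key, "DataBasePath")
    except OSError:
        db_path = r"%SystemRoot%\System32\drivers\etc"
    # DataBasePath is normally REG_EXPAND_SZ, e.g. %SystemRoot%\System32\drivers\etc
    return os.path.join(os.path.expandvars(db_path), "hosts")


if __name__ == "__main__":
    print(hosts_path())
```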

Make sure you back up the hosts file in case anything goes wrong.

Make sure you don’t remove what’s already in your default hosts file, especially the first line, which has the loopback address:

127.0.0.1 localhost

127.0.1.1 [MyComputerName].local [MyComputerName]

Just add the new entries at the bottom of the hosts file.

Remove any duplicate entries.
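The back up / keep what’s there / append at the bottom / no duplicates routine above could be automated along these lines (which would also suit a cron job). A sketch only: `merge_hosts` is a hypothetical helper, the `.bak` suffix is an assumption, and it treats a hostname as a duplicate if it already appears anywhere in the file.

```python
import shutil
from pathlib import Path


def merge_hosts(hosts_file: str, new_lines: list[str], backup_suffix: str = ".bak") -> int:
    """Append new entries below the existing content, skipping hostnames already present.

    Returns the number of lines appended.
    """
    path = Path(hosts_file)
    shutil.copy2(path, path.with_name(path.name + backup_suffix))  # back up first

    existing = path.read_text().splitlines()   # existing lines are never removed
    known = set()
    for line in existing:
        fields = line.split("#", 1)[0].split()
        known.update(h.lower() for h in fields[1:])  # hostnames already mapped

    to_add = []
    for line in new_lines:
        fields = line.split("#", 1)[0].split()
        if len(fields) < 2:
            continue                                   # skip comments and blank lines
        hosts = [h.lower() for h in fields[1:] if h.lower() not in known]
        if hosts:
            known.update(hosts)
            to_add.append(f"{fields[0]}\t{' '.join(hosts)}")

    if to_add:
        path.write_text("\n".join(existing) + "\n" + "\n".join(to_add) + "\n")
    return len(to_add)
```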

You will then have to flush your DNS cache if you have one.

If you’re on Windows:

Clear your browser’s cache.

Close all browsers.

From a cmd prompt, run the following:

ipconfig /flushdns

or reboot the machine.

If you’re on Linux (Debian):

Clear your browser’s cache.

That may be all you need to do.

Otherwise, at the command prompt (as root), try

/etc/init.d/nscd restart

or, for other Linux distros, “killall -HUP inetd” (without the quotes), which sends inetd a hangup signal and should not require a reboot.

I found that just updating the file was enough to see the changes,

as my default Debian Lenny install doesn’t have a DNS cache.

Adblock Plus

I decided to just give the Firefox add-on Adblock Plus a try, as I thought it would be a lot easier, with less (zero) administrative overhead.

Just make sure you’ve got a good filter subscription selected. I used EasyList (English).

As I was on Lenny, Adblock Plus wasn’t available for Iceweasel (Firefox on Debian) 3.0.6 unless I installed the later version of Iceweasel from the backports.debian.org repository.

I looked in the Tools->Add-ons->Get Add-ons and searched for Adblock Plus.

I was planning on performing a re-install of Debian testing soon anyway, but was keen on giving Adblock Plus a try now.

Installing Iceweasel (firefox) from backports

Most won’t have to do this, but I’m still on old stable.

This site is quite helpful

Most people will just have to make a change to their /etc/apt/sources.list.

If you are running Debian Lenny you would have to add the following line:

deb http://backports.debian.org/debian-backports lenny-backports main contrib non-free

For later versions of Debian, replace the version-specific part with your version’s code name.
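The substitution is mechanical, as this tiny sketch shows: only the suite name changes, and pointing `mirror` at an apt-proxy instance gives the client-side line used further down. `backports_line` is a hypothetical helper, and the proxy host and port in the usage line are placeholders.

```python
def backports_line(codename: str,
                   mirror: str = "http://backports.debian.org/debian-backports",
                   components=("main", "contrib", "non-free")) -> str:
    """Build the sources.list line for <codename>-backports."""
    return f"deb {mirror} {codename}-backports {' '.join(components)}"


if __name__ == "__main__":
    print(backports_line("lenny"))
    # Via apt-proxy (placeholder host and port):
    print(backports_line("lenny", mirror="http://myproxy:9999/backports"))
```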

As I’m using apt-proxy to cache my packages network wide, I had to make sure I had the following section in the /etc/apt-proxy/apt-proxy-v2.conf file

[backports]

;; backports

backends = http://backports.debian.org/debian-backports

min_refresh_delay = 1d

and the following in the client pc’s /etc/apt/sources.list

deb http://[MyAptProxyServer]:[MyAptProxyServersListeningPort]/backports lenny-backports main contrib non-free

You can see how the directory structure works for the repositories.

In this case, have a look at http://backports.debian.org/debian-backports/

In dists you will see lenny-backports as a subdirectory.

Within lenny-backports you’ll see main, contrib and non-free
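Put another way, the fields of a sources.list line map onto paths under the repository URL. A sketch of that mapping, assuming the standard Debian archive layout (`dists/<suite>/Release` and `dists/<suite>/<component>/binary-<arch>/`); the `i386` architecture default and the `archive_urls` name are assumptions.

```python
def archive_urls(base: str, suite: str,
                 components=("main", "contrib", "non-free"), arch: str = "i386") -> dict:
    """Map a sources.list entry onto the archive paths apt fetches indexes from."""
    base = base.rstrip("/")
    urls = {"Release": f"{base}/dists/{suite}/Release"}  # suite-level metadata
    for comp in components:
        # Per-component binary package indexes (Packages, Packages.gz, ...) live here.
        urls[comp] = f"{base}/dists/{suite}/{comp}/binary-{arch}/"
    return urls


if __name__ == "__main__":
    for name, url in archive_urls("http://backports.debian.org/debian-backports/",
                                  "lenny-backports").items():
        print(f"{name}: {url}")
```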

Now just add the below section to the client pc’s /etc/apt/preferences file

In my case I didn’t have this file, so I created it.

What’s this for?

If a package was installed from Backports and there is a newer version there, it will be upgraded from there.

Other packages that are also available from Backports will not be upgraded to the Backports version unless explicitly stated with

-t lenny-backports

As usual, check the apt_preferences man page for in-depth details.

# APT PINNING PREFERENCES
Package: *
Pin: release a=lenny-backports
Pin-Priority: 200
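To get a feel for why a priority of 200 gives the behaviour described above, here is a much-simplified model of how APT picks a candidate version. It is not APT’s actual code (see apt_preferences(5) for the real rules): ordinary releases get 500, the release named with -t is treated as 990, an installed version not otherwise available counts as 100, and versions older than the installed one are skipped unless pinned at 1000 or more. Versions are plain tuples and the numbers in the tests are illustrative, not real Debian version strings.

```python
def pick_candidate(available, installed=None):
    """Simplified APT candidate selection.

    available: list of (version, priority) pairs, one per archive offering the package.
    installed: the installed version, or None.
    """
    options = list(available)
    if installed is not None:
        if all(v != installed for v, _ in options):
            options.append((installed, 100))  # installed-only version counts as 100
        # Priorities below 1000 never cause a downgrade.
        options = [(v, p) for v, p in options if v >= installed or p >= 1000]
    # Highest priority wins; ties go to the higher version.
    return max(options, key=lambda vp: (vp[1], vp[0]))[0]
```

In this model a fresh install picks the stable version (500 beats 200), `-t lenny-backports` lifts the backport to 990, and a package already installed from Backports follows newer backports, because 200 beats the installed version’s 100 while the older stable version is excluded as a downgrade.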

Now as root

apt-get update

apt-get -t lenny-backports install iceweasel

Now, because we’ve added the /etc/apt/preferences file, whenever there are updates to the backported version of Iceweasel, we’ll get them when we do an

apt-get upgrade

Now a search for Adblock Plus through Iceweasel’s Tools->Add-ons->Get Add-ons revealed the plugin.

Installed it and selected the EasyList (English) filter subscription.

Browsed some sites I knew had popups and ads I didn’t want, and it worked great!

Adblock Plus gives good visibility into each request made, showing what it’s blocking, what it could possibly block, etc., through its Close blockable items menu (Ctrl+Shift+V).

So personally I think I’d stick with the add-on (for Firefox users, that is) going forward, as it just seemed to work.

I’m not sure about other browser platforms.

I also use this with the NoScript plugin, which I find great at stopping JavaScript, Flash and other executable code from being run from domains I’m not expecting it to be run from.

I’m also using OpenDNS as name servers.

They provide a lot of control over what can be accessed by way of domain.

You can also provide custom images and messages to be displayed for requested sites that you don’t want to allow.

They also provide statistics on who on your network is accessing which sites, and which sites they are attempting to access.

Plus a lot more.

I’m looking into using Squid with Snort or Privoxy to take care of a lot more, such as:

- Providing anonymous web browsing

- Content caching

Resources

http://hostsfile.mine.nu/

http://winhelp2002.mvps.org/hosts.htm

http://www.accs-net.com/hosts/hostsforlinux.html

There is also a good podcast on the hosts file by Xoke here.

Posted in GNU/Linux, Microsoft, Networking, Security