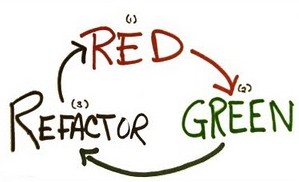

My last employers software development team recently took up the challenge of writing their tests before writing the functionality for which the test was written. In software development, this is known as Test Driven Development or TDD.

TDD is a hard concept to get developers to embrace. It’s often as much of a paradigm shift as persuading a procedural programmer to start creating Object Oriented designs. Some never get it. Fortunately we had a very talented bunch of developers, and they’ve taken to it like fish to water.

The first thing to clear up is that TDD is not primarily about testing, but rather it forces the developer to write code that is testable (the fact the code has tests written for it and running regularly is a side effect, albeit a very positive one).

This is why there is often some confusion about TDD and the fact it or its derivatives (BDD, ATDD, AAT, etc.) are primarily focused on creating well designed software. Code that is testable must be modular, which provides good separation of concerns.

- Testing is about measuring where the quality is currently at.

- TDD and its derivatives are about building the quality in from the start.

TDD concentrates on writing a unit test for the routine we are about to create before it’s created. A developer writes code that acts as a low-level specification (the test) that will be run on the routine, to confirm that the routine does what we expect it will do.

To unit test a routine, we must be able to break out the routines dependencies and separate them. If we don’t do this, the hierarchy of calls often grows exponentially.

Thus:

- We end up testing far more than we want or need to.

- The complexity gets out of hand.

- The test takes longer to execute than it needs to.

- Thus, the tests don’t get run as often as they should because we developers have to wait, and we live in an instant society.

This allows us to ignore how the dependencies behave and concentrate on a single routine. There are a number of concepts we can instantiate to help with this.

We can use:

- Mocks and Stubs

- Fakes

- Dummies

- Shims

Although TDD isn’t primarily about testing, its sole purpose is to create solid, well designed, extensible and scalable software. TDD encourages and in some cases forces us down the path of the SOLID principles, because to test each routine, each routine must be able to stand on its own.

SOLID principles

So what does SOLID give us? SOLID stands for:

- Single Responsibility Principle

- Open Closed Principle

- Liskov Substitution Principle

- Interface Segregation Principle

- Dependency Inversion Principle

Single Responsibility Principle

- Each class should have one and only one reason to change.

- Each class should do one thing and do it well.

Just because you can, doesn’t mean you should.

Open Closed Principle

- A class’s behaviour should be able to be extended without modifying it.

- There are several ways to achieve this. Some of which are polymorphism via inheritance, aggregation, wrapping.

Liskov Substitution Principle

- Please check out my posts on this topic

Interface Segregation Principle

- When an interface consists of too many members, it should be split into smaller and more specific (to the client’s needs) interfaces, so that clients using the interface only use the members applicable to them.

- A client should not have to know about all the extra interface members they don’t use.

- This encourages systems to be decoupled and thus more easily re-factored, extended and scaled.

Dependency Inversion Principle

- Often implemented in the form of the Dependency Injection Pattern via the more specific Inversion of Control Principle (IoC). In some circles, known as the Hollywood Principle… Don’t call us, we’ll call you.

TDD assists in the monitoring of technical debt and streamlines the path to quality design.

Additional info on optimizing your team’s testing effort can be found here.