When it comes to measuring the security posture of an application or network, the best defence against an attacker is offence. What does that mean? It means your best defence is to have someone with your best interests (generally employed by you), if we’re talking about your asset, assess the vulnerabilities of your asset and attempt to exploit them.

In the words of Offensive Security (Creators of Kali Linux), Kali Linux is an advanced Penetration Testing and Security Auditing Linux distribution. For those that are familiar with BackTrack, basically Kali is a new creation based on Debian rather than Ubuntu, with significant improvements over BackTrack.

When it comes to actually getting Kali on some hardware, there is a multitude of options available.

All externally listening services by default are disabled, but very easy to turn on if/when required. The idea being to reduce chances of detecting the presence of Kali.

I’ve found the Kali Linux documentation to be of a high standard and plentiful.

In this article I’ll go over getting Kali Linux installed and set-up. I’ll go over a few of the packages in a low level of detail (due to the share number of them) that come out of the box. On top of that I’ll also go over a few programmes I like to install separately. In a subsequent article I’d like to continue with additional programmes that come with Kali Linux as there are just to many to cover in one go.

System Requirements

- Minimum of 8 GB disk space is required for the Kali install

- Minimum RAM 512 MB

- CD/DVD Drive or USB boot support

Supported Hardware

Officially supported architectures

i386, amd64, ARM (armel and armhf)

Unofficial (but maintained) images

You can download official Kali Linux images for the following, these are maintained on a best effort basis by Offensive Security.

- VMware (pre-made vm with VMware tools installed)

ARM images

- rk3306 mk/ss808CPU: dual-core 1.6 GHz A9

RAM: 1 GB

- Raspberry Pi

- ODROID U2CPU: quad-core 1.7 GHz

RAM: 2GB

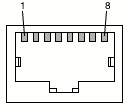

Ethernet: 10/100Mbps

- ODROID X2CPU: quad-core Cortex-A9 MPCore

RAM: 2GB

USB 2: 6 ports

Ethernet: 10/100Mbps

- MK802/MK802 II

- Samsung Chromebook

- Galaxy Note 10.1

- CuBox

- Efika MX

- BeagleBone Black

Create a Customised Kali Image

Kali also provides a simple way to create your own ISO image from the latest source. You can include the packages you want and exclude the ones you don’t. You can customise the kernel. The options are virtually limitless.

The default desktop environment is Gnome, but Kali also provides an easy way to configure which desktop environment you use before building your custom ISO image.

The alternative options provided are: KDE, LXDE, XFCE, I3WM and MATE.

Kali has really embraced the Debian ethos of being able to be run on pretty well any hardware with extreme flexibility. This is great to see.

Installation

You should find most if not all of what you need here. Just follow the links specific to your requirements.

As with BackTrack, the default user is “root” without the quotes. If your installing, make sure you use a decent password. Not a dictionary word or similar. It’s generally a good idea to use a mix of upper case, lower case characters, numbers and special characters and of a decent length.

I’m not going to repeat what’s already documented on the Kali site, as I think they’ve done a pretty good job of it already, but I will go over some things that I think may not be 100% clear at first attempt. Also just to be clear, I’ve done this on a Linux box.

Now once you have down loaded the image that suites your target platform,

you’re going to want to check its validity by verifying the SHA1 checksums. Now this is where the instructions can be a little confusing. You’ll need to make sure that the SHA1SUMS file that contains the specific checksum you’re going to use to verify the checksum of the image you downloaded, is in fact the authentic SHA1SUMS file. instructions say “When you download an image, be sure to download the SHA1SUMS and SHA1SUMS.gpg files that are next to the downloaded image (i.e. in the same directory on the server).”. You’ve got to read between the lines a bit here. A little further down the page has the key to where these files are. It’s buried in a wget command. Plus you have to add another directory to find them. The location was here. Now that you’ve got these two files downloaded in the same directory, verify the SHA1SUMS.gpg signature as follows:

$ gpg --verify SHA1SUMS.gpg SHA1SUMS

gpg: Signature made Thu 25 Jul 2013 08:05:16 NZST using RSA key ID 7D8D0BF6

gpg: Good signature from "Kali Linux Repository <devel@kali.org>

You’ll also get a warning about the key not being certified with a trusted signature.

Now verify the checksum of the image you downloaded with the checksum within the (authentic) SHA1SUMS file

Compare the output of the following two commands. They should be the same.

# Calculate the checksum of your downloaded image file.

$ sha1sum [name of your downloaded image file]

# Print the checksum from the SHA1SUMS file for your specific downloaded image file name.

$ grep [name of your downloaded image file] SHA1SUMS

Kali also has a live USB Install including persistence to your USB drive.

IRC: #kali-linux on FreeNode. Stick to the rules.

What’s Included

> 300 security programmes packaged with the operating system:

Before installation you can view the tools included in the Kali repository.

Or once installed by issuing the following command:

# prints complete list of installed packages.

dpkg --get-selections | less

To find out a little more about the application:

dpkg-query -l '*[some text you think may exist in the package name]*'

Or if you know the package name your after:

dpkg -l [package name]

Want more info still?

man [package name]

Some of the notable applications installed by default

Framework that provides the infrastructure to create, re-use and automate a wide variety of exploitation tasks.

If you require database support for Metasploit, start the postgresql service.

# I like to see the ports that get opened, so I run ss -ant before and after starting the services.

ss -ant

service postgresql start

ss -ant

ss or “socket statistics” which is a new replacement programme for the old netstat command. ss gets its information from kernel space via Netlink.

Start the Metasploit service:

ss -ant

service metasploit start

ss -ant

When you start the metasploit service, it will create a database and user, both with the names msf3, providing you have your database service started. Now you can run msfconsole.

Start msfconsole:

msfconsole

The following is an image of terminator where I use the top pane for stopping/starting services, middle pane for checking which ports are opened/closed, bottom pane for running msfconsole. terminator is not installed by default. It’s as simple as apt-get install terminator

You can find full details of setting up Metasploits database and start/stopping the services here.

You can also find the Metasploit frameworks database commands simply by typing help database at the msf prompt.

# Print the switches that you can run msfconsole with.

msfconsole -h

Once your in msf type help at the prompt to get yourself started.

There is also a really easy to navigate all encompassing set of documentation provided for msfconsole here.

You can also set-up PostgreSQL and Metasploit to launch on start-up like this:

update-rc.d postgresql enable

update-rc.d metasploit enable

Offensive Security also has a Metasploit online course here.

Armitage

Just as it was included in BackTrack, which is no longer supporting Armitage, you’ll also find Armitage comes installed out of the box in version 1.0.4 of Kali Linux. Armitage is a GUI to assist in metasploit visualisation. You can find the official documentation here. Offensive Security has also done a good job of providing their own documentation for Armitage over here. To get started with Armitage, just make sure you’ve got the postgresql service running. Armitage will start the metasploit service for you if it’s not already running. Armitage allows your red team to collaborate by using a single instance of Metasploit. There is also a commercial offering developed by Raphael Mudge’s company “Strategic Cyber LLC” which also created Armitage, called Cobalt Strike. Cobalt Strike currently costs $2500 per user per year. There is a 21 day trial though. Cobalt Strike offers a bunch of great features. Check them out here. Armitage can connect to an existing instance of Metasploit on another host.

NMap

Target use is network discovery and auditing. Provides host information for anything it can access from a network. Also now has a scripting engine that can execute arbitrary custom tasks.

I’m guessing we’ve probably all used NMap? ZenMap which Kali Linux also provides out of the box Is a gui for NMap. This was also included in BackTrack.

Intercepting Web Proxies

Burp Suite

I use burp quite regularly and have a few blog posts where I’ve detailed some of it’s use. In fact I’ve used it to reverse engineer the comms between VMware vSphere and ESXi to create a UPS solution that deals with not only virtual hosts but also the clients.

WebScarab

I haven’t really found out what webscarab’s sweet spot is if it has one. I’d love to know what it does better than burp, zap and w3af combined? There is also a next generation version which according to the google code repository hasn’t had any work done on it since March 2011, where as the classic version is still receiving fixes. The documentation has always seemed fairly minimalistic also.

In terms of web proxy/interceptors I’ve also used fiddler which relies on the .NET framework and as mono is not installed out of the box on Kali, neither is fiddler.

OWASP Zed Attack Proxy (ZAP)

Which is an OWASP flagship project, so it’s free and open source. Cross platform. It was forked from the Paros Proxy project which is not longer supported. Includes automated, passive, brute force and port scanners. Traditional and AJAX spiders. Can even find unlinked files. Provides fuzzing, port scanning. Can be run without the UI in headless mode and can be accessed via a REST API. Supports Anti CSRF tokens. The Script Console that is one of the add-ons supports any language that JSR (Java Specification Requests) 223 supports. That’s languages such as JavaScript Groovy, Python, Ruby and many more. There is plenty of info on the add-ons here. OWASP also provide directions on how to write your own extensions and they provide some sample templates. Following is the list of current extensions, which can also be managed from within Zap. “Manage Add-ons” menu → Marketplace tab. Select and click “Install Selected”

The idea is to first set Zap up as a proxy for your browser. Fetch some web pages (build history). Zap will create a history of URLs. You then right click the item of interest and click Attack->[one of the spider options], then click the play button and watch the progress bar. which will crawl all the pages you have access to according to your permissions. Then under the Analyse menu → Scan Policy… Setup your scan policy so your only scanning what you want to scan. Then hit Scan to assess your target application. Out of the box, you’ve got many scan options. Zap does a lot for you. I’m really loving this tool OWASP!

As usual with OWASP, zap has a wealth of documentation. If zap doesn’t provide enough out of the box, extend it. OWASP also provide an API for zap.

You can find the user group here (also accessible from the ZAP ‘Online’ menu.), which is good for getting help if the help file (which can also be found via ZAP itself) fails to yeild. There is also a getting started guide which is a work in progress. There is also the ZAP Blog.

FoxyProxy

Although nothing to do with Kali Linux and could possibly be in the IceWeasel add-ons section below, I’ve added it here instead as it really reduces friction with web proxy interception. FoxyProxy is a very handy add-on for both firefox and chromium. Although it seems to have more options for firefox, or at least they are more easily accessible. It allows you to set-up a list of proxies and then switch between them as you need. When I run chromium as a non root user I can’t change the proxy settings once the browser is running. I have to run the following command in order to set the proxy to my intermediary before run time like this:

chromium-browser --temp-profile –proxy-server=localhost:3001

Firefox is a little easier, but neither browsers allow you to build up lists of proxies and then switch them in mid flight. FoxyProxy provides a menu button, so with two clicks you can disable the add-on completely to revert to your previous settings, or select any or your predefined proxies. This is a real time saver.

Vulnerability Scanners

Open Vulnerability Assessment System (OpenVAS)

Forked from the last free version (closed in 2005) of Nessus. OpenVAS plugins are written in the same language that Nessus uses. OpenVAS looks for known misconfigurations and vulnerabilities common in out of date software. In fact it covers the following OWASP Top 10 items:

- No.5 Security Misconfiguration

- No.7 Missing Function Level Access Control (formerly known as “failure to restrict URL access”)

- No.9 Using Components with Known Vulnerabilities.

OpenVAS also has some SQLi and other probes to test application input, but it’s primary purpose is to scan networks of machines with out of date software and bad configurations.

Tests continue to be added. Now currently at 32413 Network Vulnerability Tests (NVTs) details here.

Greenbone Security Desktop (gsd) who’s package is a GUI that uses the Greenbone Security Manager, OpenVAS Manager or any other service that offers the OpenVAS Management Protocol (omp) protocol. Currently at version 1.2.2 and licensed under the GPLv2. The Greenbone Security Assistant (gsad) is currently at version 4.0.0. The Germany government also sponsor OpenVAS.

From the menu: Kali Linux → Vulnerability Analysis → OpenVAS, we have a couple of short-cuts visible. openvas-gsd is actually just the gsd package and openvas-setup which is the set-up script.

Before you run openvas-gsd, you can either:

- Run openvas-setup which will do all the setup which I think is already done on Kali. At the end of this, you will be prompted to add a password for a user to the Admin role. The password you add here is for a new user called “admin” (of course it doesn’t say that, so can be a little confusing as to what the password is for).

- Or you can just run the following command, which is much quicker because you don’t run the set-up procedure:

openvasad -c 'add_user' -n [a new administrative username of your choosing] -r Admin

You’ll be prompted to add a new password. Make sure you remember it.

Check out the man page for further options. For example the -c switch is a shortened –command and it lists a selection of commands you can use.

I think -n is for –name although not listed in the man page. -r switch is –role. Either User or Admin.

The user you’ve just added is used to connect the gsd to the:

- openvasmd (OpenVAS Manager daemon) which listens on port 9390

- openvassd (OpenVAS Scanner daemon) which listens on port 9391

- gsad (Greenbone Security Assistant daemon) which listens on port 9392. This is a web app, which also listens on port 443

- openvasad (OpenVAS Administrator daemon) which listens on 9393

The core functionality is provided by the scanner and the manager. The manager handles and organises scan results. The gsad or assistant connects to the manager and administrator to provide a fully featured user interface. There is also a CLI (omp) but I haven’t been able to get this going on Kali Linux yet. You’ll also find that the previous link has links to all the man pages for OpenVAS. You can read more about the architecture and how the different components fit together.

I’ve also found that sometimes the daemons don’t automatically start when gsd starts. So you have to start them manually.

openvasmd && openvassd && gsad && openvasad

You can also use the web app https://127.0.0.1/omp

Then try logging in to the openvasmd. When your finished with gsd you can kill the running daemons if you like. I like to keep an eye on the listening ports when I’m done to keep things as quite as possible.

Check the ports.

ss -anp

Optional to see the processes running, but not necessary.

ps -e

kill -9 <PID of openvasad> <PID of gsad> <PID of openvassd> <PID of openvasmd>

There are also plenty of options when it comes to the report. This can be output in HTML, PDF, XML, Emailed and quite a few others. The reports are colour coded and you can choose what to have put in them. The vulnerabilities are classified by risk: High, Medium, Low, OpenVAS can take quite a while to scan as it runs so many tests.

This is how to get started with gsd.

Web Vulnerability Scanners

This is the generally accepted criteria of a tool to be considered a Web Application Security Scanner.

SkipFish

A high performance active reconnaissance tool written in C. From the documentation “Multiplexing single-thread, fully asynchronous network I/O and data processing model that eliminates memory management, scheduling, and IPC inefficiencies present in some multi-threaded clients.”. OK. So it’s fast.

which prepares an interactive sitemap by carrying out a recursive crawl and probes based on existing dictionaries or ones you build up yourself. Further details in the documentation linked below.

Doesn’t conform to most of the criteria outlined in the above Web Application Security Scanner criteria.

SkipFish v2.05 is the current version packaged with Kali Linux.

SkipFish v2.10b (released Dec 2012)

Free and you can view the source code. Apache license 2.0

Performs a similar role to w3af.

Project details can be found here.

You can find the tests here.

How do you use it though? This is a good place to start. Instead of reading through the non-existent doc/dictionaries.txt, I think you can do as well by reading through /usr/share/skipfish/dictionaries/README-FIRST.

The other two documentation sources are the man page and skipfish with the -h option.

Web Application Attack and Audit Framework (w3af)

Andres Riancho has created a masterpiece. The main behavior of this application is to assess and identify vulnerabilities in a web application by sending customised HTTP requests. Results can be output in quite a few formats including email. It can also proxy, but burp suite is more focused on this role and does it well.

Can be run with a gui: w3af_gui or from the terminal: w3af_console. Written in Python and Runs on Linux BSD or Mac. Older versions used to work on Windows, but it’s not currently being tested on Windows. Open source on GitHub and released under the GPLv2 license.

You can write your own plug-ins, but check first to make sure it doesn’t already exist. The plugins are listed within the application and on the w3af.org web site along with links to their source code, unit tests and descriptions. If it doesn’t appear that the plug-in you want exists, contact Andres Riancho to make sure, write it and submit a pull request. Also looks like Andres Riancho is driving the development TDD style, which means he’s obviously serious about creating quality software. Well done Andres!

w3af provides the ability to inject your payloads into almost every part of the HTTP request by way of it’s fuzzing engine. Including: query string, POST data, headers, cookie values, content of form files, URL file-names and paths.

There’s a good set of documentation found here and you can watch the training videos. I’m really looking forward to using this in anger.

Nikto

Is a web server scanner that’s not overly stealthy. It’s built on “Rain Forest Puppies” LIbWhisker2 which has a BSD license.

Nikto is free and open source with GPLv3 license. Can be run on any platform that runs a perl interpreter. It’s source can be found here. The first release of Nikto was in December of 2001 and is still under active development. Pull requests encouraged.

Suports SSL. Supports HTTP proxies, so you can see what Nikto is actually sending. Host authentication. Attack encoding. Update local databases and plugins via the -update argument. Checks for server configuration items like multiple index files and HTTP server options. Attempts to identify installed web servers and software.

Looks like the LibWhisker web site no longer exists. Last release of LibWhisker was at the beginning of 2010.

Nikto v2.1.4 (Released Feb 20 2011) is the current version packaged with Kali Linux. Tests for multiple items, including > 6400 potentially dangerous files/CGIs. Outdated versions of > 1200 servers. Insecurities of specific versions of > 270 servers.

Nikto v2.1.5 (released Sep 16 2012) is the latest version. Tests for multiple items, including > 6500 potentially dangerous files/CGIs. Outdated versions of > 1250 servers. Insecurities of specific versions of > 270 servers.

Just spoke with the Kali developers about the old version. They are now building a package of 2.1.5 as I write this. So should be an apt-get update && apt-get upgrade away by the time you read this all going well. Actually I can see it in the repo now. Man those guys are responsive!

Most of the info you will need can be found here.

SQLNinja

sqlninja: Targets Microsoft SQL Servers. Uses SQL injection vulnerabilities on a web app. Focuses on popping remote shells on the target database server and uses them to gain a foothold over the target network. You can set-up graphical access via a VNC server injection. Can upload executables by using HTTP requests via vbscript or debug.exe. Supports direct and reverse bindshell. Quite a few other methods of obtaining access. Documentation here.

Text Editors

- Vim. Shouldn’t need much explanation.

- Leafpad. This is a very basic graphical text editor. A bit like Windows Notepad.

- Gvim. This is the Graphical version of Vim. I’ve mostly used sublime text 2 & 3, gedit on Linux, but Gvim is really quite powerful too.

Note Keeping

- KeepNote. Supported on Linux, Windows and MacOS X. Easy to transport notes by zipping or copying a folder. Notes stored in HTML and XML.

- Zim Desktop Wiki.

Other Notable Features

- Offensive Securities Kali Linux is free and always will be. It’s also completely open (as it’s based on debian) to modification of it’s OS or programmes.

- FHS compliant. That means the file system complies to the Linux Filesystem Hierarchy Standard

- Wireless device support is vast. Including USB devices.

- Forensics Mode. As with BackTrack 5, the Kali ISO also has an option to boot into the forensic mode. No drives are written to (including swap). No drives will be auto mounted upon insertion.

Customising installed Kali

Wireless Card

I had a little trouble with my laptop wireless card not being activated. Turned out to be me just not realising that an external wi-fi switch had to be turned on. I had wireless enabled in the BIOS. The following where the steps I took to resolve it:

Read Kali Linux documentation on Troubleshooting Wireless Drivers and found the card listed with lspci. Opened /var/log/dmesg with vi. Searched for the name of the card:

#From command mode to make search case insensitive:

:set ic

#From command mode to search

/[name of my wireless card]

There were no errors. So ran iwconfig (similar to ifconfig but dedicated to wireless interfaces). I noticed that the card was definitely present and the Tx-Power was off. I then thought I’d give rfkill a spin and it’s output made me realise I must have missed a hardware switch somewhere.

Found the hard switch and turned it on and we now have wireless.

Adding Shortcuts to your Panel

[Alt]+[right click]->[Add to Panel…]

Or if your Kali install is on VirtualBox:

[Windows]+[Alt]+[right click]->[Add to Panel…]

Caching Debian Packages

If you want to:

- save on bandwidth

- have a large number of your packages delivered at your network speed rather than your internet speed

- have several debian based machines on your network

I’d recommend using apt-cacher-ng. If not already, you’ll have to set this up on a server and add the following file to each of your debian based machines.

/etc/apt/apt.conf with the following contents and set it’s permissions to be the same as your sources.list:

Acquire::http::Proxy “http://[ip address of your apt-cacher server]:3142”;

IceWeasel add-ons

- Firebug

- NoScript

- Web Developer

- FoxyProxy (more details mentioned above)

- HackBar. Somewhat useful for (en/de)coding (Base64, Hex, MD5, SHA-(1/256), etc), manipulating and splitting URLs

SQL Inject Me

Nothing to do with Kali Linux, but still a good place to start for running a quick vulnerability assessment. Open source software (GPLv3) from Security Compass Labs. SQL Inject Me is a component of the Exploit-Me suite. Allows you to test all or any number of input fields on all or any of a pages forms. You just fill in the fields with valid data, then test with all the tools attacks or with the top that you’ve defined in the options menu. It then looks for database errors which are rendered into the returned HTML as a result of sending escape strings, so doesn’t cater for blind injection. You can also add remove escape strings and resulting error strings that SQL Inject Me should look for on response. The order in which each escape string can be tried can also be changed. All you need to know can be found here.

XSS Me

Nothing to do with Kali Linux, but still a good place to start for running a quick vulnerability assessment. Open source software (GPLv3) from Security Compass Labs. XSS Me is also a component of the Exploit-Me suite. This tool’s behaviour is very similar to SQL Inject Me (follows the POLA) which makes using the tools very easy. Both these add-ons have next to no learning curve. The level of entry is very low and I think are exactly what web developers that make excuses for not testing their own security need. The other thing is that it helps developers understand how these attacks can be carried out. XSS Me currently only tests for reflected XSS. It doesn’t attempt to compromise the security of the target system. Both XSS Me and SQL Inject Me are reconnaissance tools, where the information is the vulnerabilities found. XSS Me doesn’t support stored XSS or user supplied data from sources such as cookies, links, or HTTP headers. How effective XSS Me is in finding vulnerabilities is also determined by the list of attack strings the tool has available. Out of the box the list of XSS attack strings are derived from RSnakes collection which were donated to OWASP who now maintains it as one of their cheatsheets.. Multiple encodings are not yet supported, but are planned for the future. You can help to keep the collection up to date by submitting new attack strings.

Chromium

Because it’s got great developer tools that I’m used to using. In order to run this under the root account, you’ll need to add the following parameter to /etc/chromium/default between the quotes for CHROMIUM_FLAGS=””

--user-data-dir

I like to install the following extensions: Cookies, ScriptSafe

Terminator

Because I like a more powerful console than the default. Terminator adds split screen on top of multi tabs. If you live at the command line, you owe it to yourself to get the best console you can find. So far terminator still fits this bill for me.

KeePass

The password database app. Because I like passwords to be long, complex, unique for everything and as secure as possible.

Exploits

I was going to go over a few exploits we could carry out with the Kali Linux set-up, but I ran out of time and page space. In fact there are still many tools I wanted to review, but there just isn’t enough time or room in this article. Feel free to subscribe to my blog and you’ll get an update when I make posts. I’d like to extend on this by reviewing more of the tools offered in Kali Linux

This has been one of my pet topics for a while. Why? Because the lack of it is so often abused. In fact this is one of the primary techniques for No.1 (Injection) and No.3 (XSS) of this years OWASP Top 10 List (unchanged from 2010). I’d encourage any serious web developers to look at my Sanitising User Input From Browser. Part 1” and Part 2

Part 1 deals with the client side (untrused) code.

Part 2 deals with the server side (trusted) code.

I provide source code, sources and discuss the following topics:

- Minimising the attack surface

- Defining maximum field lengths (validation)

- Determining a white list of allowable characters (validation)

- Escaping untrusted data

- External libraries, cheat sheets, useful code and sites, I used. Also discuss the less useful resources and why.

- The point of validating client side when the server side is going to do it again anyway

- Full set of server side tests to test the sanitisation is doing what is expected